It was certainly a misunderstanding on my part: I had used pgmpy previously, and it did exactly what I needed (for prototyping) by simply creating the dynamic network, adding the evidence, and running it. This does not happen in PySMILE, as evidence in t+n can affect beliefs in t, but it is closer to what I will need to do later: restore the net from the DB, initialize it with the previous status (ANCHOR), add evidence (PLATE), gather results (TERMINAL), and store the results in the DB.
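To make the difference concrete, here is a minimal sketch in plain Python. It uses neither PySMILE nor pgmpy. The two-state model, its probabilities, and the function names `forward` and `smooth` are all assumptions made up for illustration. It compares beliefs that only use evidence up to step t (filtering) with beliefs that use all the evidence, including later steps (smoothing).

```python
# Toy two-state hidden Markov chain; every number here is made up for illustration.
PRIOR = [0.5, 0.5]                      # P(X_0)
TRANSITION = [[0.9, 0.1], [0.1, 0.9]]   # TRANSITION[i][j] = P(X_{t+1} = j | X_t = i)
EMISSION = [[0.8, 0.2], [0.2, 0.8]]     # EMISSION[i][k] = P(E_t = k | X_t = i)


def normalise(values):
    total = sum(values)
    return [v / total for v in values]


def forward(observations):
    """Filtered beliefs P(X_t | e_0..e_t); None marks a step without evidence."""
    beliefs = []
    current = PRIOR
    for t, obs in enumerate(observations):
        if t > 0:
            # Predict one step ahead with the transition model.
            current = [sum(current[i] * TRANSITION[i][j] for i in range(2))
                       for j in range(2)]
        if obs is not None:
            # Weight by the likelihood of the observed evidence.
            current = [current[j] * EMISSION[j][obs] for j in range(2)]
        current = normalise(current)
        beliefs.append(current)
    return beliefs


def smooth(observations):
    """Smoothed beliefs P(X_t | e_0..e_T), using a backward pass over later evidence."""
    filtered = forward(observations)
    backward = [1.0, 1.0]
    smoothed = [None] * len(observations)
    for t in range(len(observations) - 1, -1, -1):
        smoothed[t] = normalise([filtered[t][i] * backward[i] for i in range(2)])
        obs = observations[t]
        likelihood = [1.0, 1.0] if obs is None else [EMISSION[j][obs] for j in range(2)]
        # Carry the evidence at step t back to step t - 1.
        backward = normalise([
            sum(TRANSITION[i][j] * likelihood[j] * backward[j] for j in range(2))
            for i in range(2)
        ])
    return smoothed


observations = [None, None, 1]   # evidence only at the last step
print(forward(observations)[0])  # belief at t=0 ignoring later evidence
print(smooth(observations)[0])   # belief at t=0 after later evidence is propagated back
```

With evidence only at the last step, the filtered belief at t=0 stays at the prior, while the smoothed belief at t=0 shifts toward the state that the later evidence supports.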

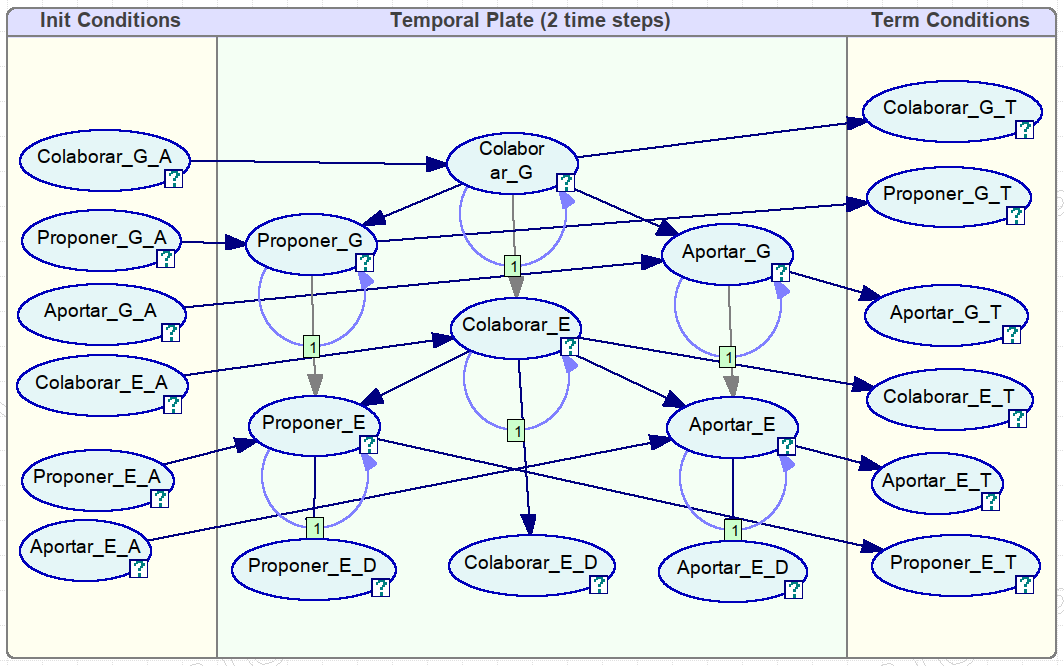

Now my prototype seems to be working for the net shown in the picture below:

- colaborar_din_genie.png

Given the evidence


```python
cn = CollaborationNetwork()

# Arguments: evidence node name, time step, observed state.
cn.add_evidence(cn.evidence_name(cn.PROPONER_E), 1, cn.BAJO)
cn.add_evidence(cn.evidence_name(cn.APORTAR_E), 2, cn.BAJO)
cn.skip_evidence(3)
cn.add_evidence(cn.evidence_name(cn.PROPONER_E), 4, cn.MEDIO)
cn.add_evidence(cn.evidence_name(cn.APORTAR_E), 5, cn.MEDIO)
cn.skip_evidence(6)
cn.add_evidence(cn.evidence_name(cn.COLABORAR_E), 7, cn.MEDIO)
cn.skip_evidence(8)
cn.skip_evidence(9)
cn.add_evidence(cn.evidence_name(cn.PROPONER_E), 10, cn.ALTO)
cn.add_evidence(cn.evidence_name(cn.APORTAR_E), 11, cn.MEDIO)
cn.skip_evidence(12)
cn.skip_evidence(13)
cn.add_evidence(cn.evidence_name(cn.PROPONER_E), 14, cn.MEDIO)
cn.skip_evidence(15)
cn.skip_evidence(16)
```

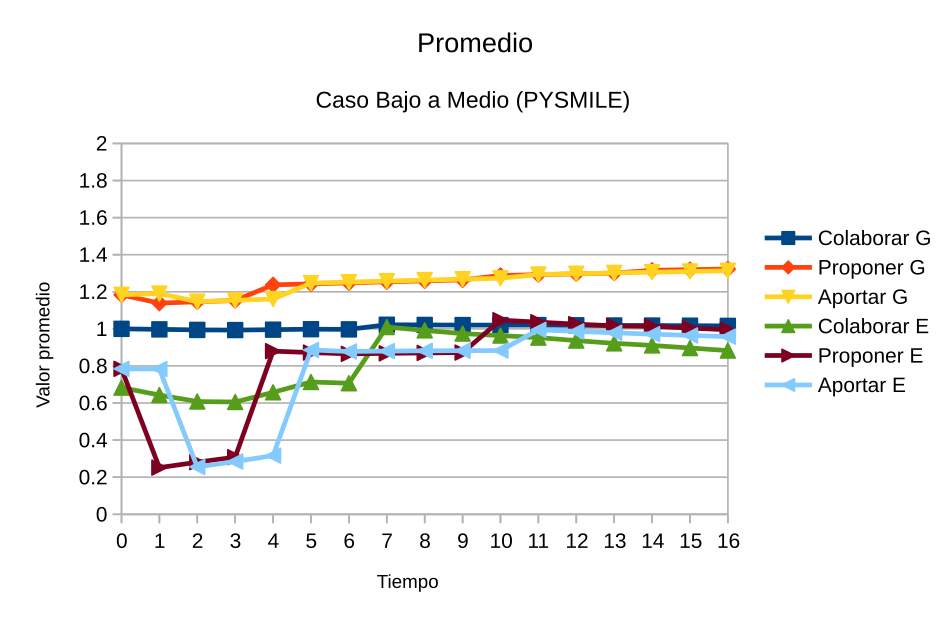

it produces what I was expecting (

Promedio means Average, and

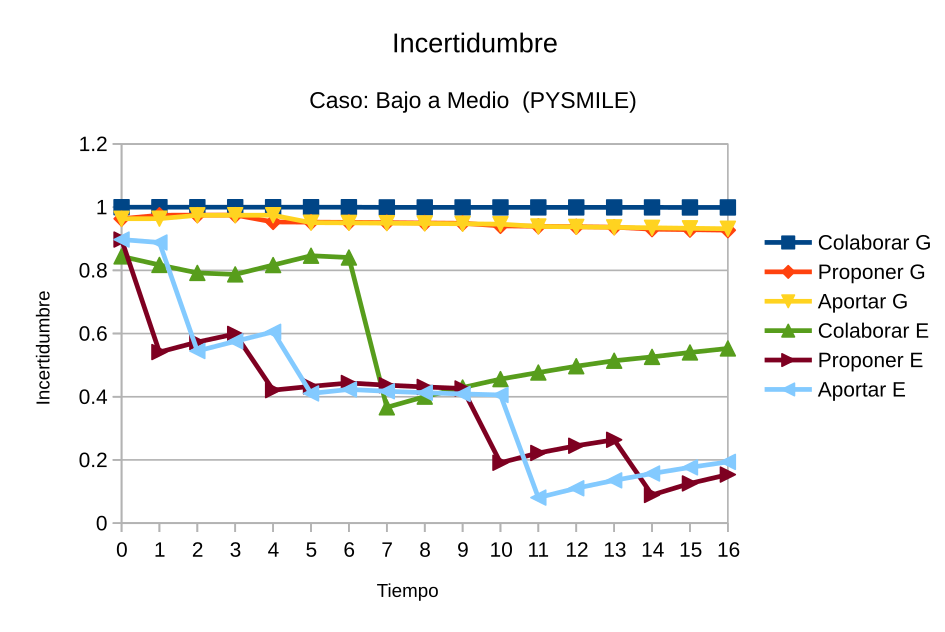

Incertidumbre means Uncertainty, the later calculated as normalised entropy):

- caso-bajoMedio-16-pysmile.png

- caso-bajoMedio-16-incertidumbre-pysmile.png
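For reference, one common way to compute a normalised entropy like the Incertidumbre value is to divide the Shannon entropy of a node's belief vector by its maximum, log(n), where n is the number of states. The sketch below assumes that definition. The function name `normalised_entropy` and the three-state example beliefs are hypothetical and are not taken from the prototype.

```python
import math


def normalised_entropy(probabilities):
    """Shannon entropy divided by its maximum, log(n), so the result lies in [0, 1]."""
    n = len(probabilities)
    if n < 2:
        return 0.0  # a single-state variable carries no uncertainty
    # Zero-probability states contribute nothing (p * log p tends to 0 as p tends to 0).
    entropy = -sum(p * math.log(p) for p in probabilities if p > 0.0)
    return entropy / math.log(n)


# Hypothetical beliefs over three states, for example BAJO, MEDIO, ALTO.
print(normalised_entropy([1 / 3, 1 / 3, 1 / 3]))  # maximum uncertainty
print(normalised_entropy([1.0, 0.0, 0.0]))        # no uncertainty
print(normalised_entropy([0.7, 0.2, 0.1]))        # somewhere in between
```

Dividing by log(n) makes the values comparable across nodes with different numbers of states: a uniform belief always maps to 1 and a fully certain belief to 0.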